I am using the Docker version (on Linux), which runs very nicely so far. Do custom models work with the Docker version too, and if yes, how?

Probably by putting the custom models somewhere in the right path and enabling them, but what is the right path, and how do I enable them?

This is how I got the combined.pt model (which boosts my detection by a factor of 3) working with Docker:

This is my docker-compose.yml for SenseAI:

Code:

version: "3.3"
services:
  deepstack:
    image: codeproject/ai-server:latest
    restart: unless-stopped
    container_name: senseai
    ports:
      - "80:5000"
    environment:
      - VISION-SCENE=True
      - VISION-FACE=True
      - VISION-DETECTION=True
      - CUDA_MODE=False
      - MODE=Medium
      - PROFILE=desktop_cpu
      - Modules:TextSummary:Activate=False
      - MODELS_DIR=/usr/share/CodeProject/SenseAI/models
    volumes:
      - /opt/senseai/data:/usr/share/CodeProject/SenseAI:rw

As you can see, I've mapped the folder "/opt/senseai/data" on my Linux VM to the data path inside the container ("/usr/share/CodeProject/SenseAI").

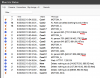

I've now created a folder "models" in /opt/senseai/data and put the "combined.pt" file there.

Remark: "yolov5m.pt" is the default model; SenseAI will download it at startup and place it in the same folder!

The "MODELS_DIR" environment variable points to that folder as seen from inside the container (in this case: /usr/share/CodeProject/SenseAI/models).
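For anyone who wants to script the folder setup described above, here is a rough sketch. The two paths are taken from the volume line in my compose file; the helper names container_to_host and install_model are made up for this example and are not part of SenseAI.

```shell
#!/bin/sh
# Sketch only. HOST_DATA and CONTAINER_DATA mirror the volume mapping
# /opt/senseai/data:/usr/share/CodeProject/SenseAI from the compose file.
# The function names below are hypothetical helpers, not SenseAI tooling.
HOST_DATA="${HOST_DATA:-/opt/senseai/data}"
CONTAINER_DATA="/usr/share/CodeProject/SenseAI"

# Translate a path inside the container to the matching path on the host.
container_to_host() {
    case "$1" in
        "$CONTAINER_DATA")
            printf '%s\n' "$HOST_DATA" ;;
        "$CONTAINER_DATA"/*)
            rel=${1#"$CONTAINER_DATA"/}
            printf '%s\n' "$HOST_DATA/$rel" ;;
        *)
            echo "not inside the mapped volume: $1" >&2
            return 1 ;;
    esac
}

# Copy a model file into the host folder that backs MODELS_DIR.
install_model() {
    dest=$(container_to_host "$CONTAINER_DATA/models") || return 1
    mkdir -p "$dest" && cp "$1" "$dest/"
}
```

With the default paths, running install_model ./combined.pt should leave the file in /opt/senseai/data/models, which the container sees as /usr/share/CodeProject/SenseAI/models.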

Then I fire up the container with "docker-compose up -d".
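If you want to double-check that the container actually sees the model after startup, something like the following should work. This is just a sketch: the container name "senseai" and the models path come from my compose file, model_in_container is a made-up helper name, and I'm assuming "ls" is available inside the image.

```shell
#!/bin/sh
# Sketch only: succeeds if the given model file is visible inside the
# running container. Container name and path are taken from the compose
# file in this post; model_in_container is a hypothetical helper.
model_in_container() {
    docker exec senseai ls "/usr/share/CodeProject/SenseAI/models/$1" >/dev/null
}
```

Calling model_in_container combined.pt returns success when the file is there; "docker logs senseai" is the place to look if it is not.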

It took me a while to learn that BI needs the model file as well ... so I've uploaded the "combined.pt" to "C:\BlueIris\Models" in my BI-VM as well. Then:

Finally you need to enter the model in each camera:

That's it.