- Sep 4, 2017


This program analyzes motion in Blue Iris cameras in real-time using Artificial Intelligence. It notifies you if a human or an object of your choice is detected and can send alert images to Telegram.

It is based on DeepQuestAI, a free multipurpose AI that you can install on your own server (e.g. the server Blue Iris is running on).

I (GentlePumpkin) stepped back from development and VorlonCD and many others have added many amazing features to AI Tool since then:

Click here for information: AI Tool (by VorlonCD) Readme

Click here for the latest version: Download Folder

AI Tool runs on Windows only; a Docker-only alternative developed by @neile is available here.


I hope that the AI Software works fine for you.

deprecated CMD Version (v0.1 - v0.6)



Version history:

For the latest updates (>v1.67), check out the AI Tool changelog on GitHub.

Older versions:

v1.67 flagging AI confirmed alerts replaces duplicate cams, Blue Iris receives detection summary

v1.65 stability

v1.64 fixed bug b10 and bug b11; contains bug b4

v1.63 fixed an error that would crash AiTool during start on some devices (bug b9); contains bug b4

v1.62 alert history filters added, configurable confidence limits, more information on irrelevant alerts, error information to Telegram, new stats: Timeline and alert confidence-frequency chart, decimal digits possible as cooldown time, fixed bug b8; contains bug b4

v1.56 now 9 instead of just 1 detection points are used to determine whether an object is covered by the privacy mask or not, fixed bug b7; contains bug b4

v1.55 bug b6 fixed; contains bug b4

v1.54 bug in the camera tab fixed; contains bug b4 and b6

v1.53 added privacy mask feature, clean redesign of the history tab, detected objects now can be marked with a rectangle around them (replacing the image cutout feature), AI Tool now can be resized/maximized, some more visual enhancements; contains bug b4

v1.43 BIG upgrade: introducing user interface, running in background, statistics etc; still contains bug b4 and b5

v0.6: fixed bug b3; change regarding b2: program will no longer try to upload an image to Telegram again because retrying to upload does not help to resolve the problem; contains b4

v0.5: added the possibility to call multiple trigger urls; contains bug b3, b4

v0.4: like v0.3, fixed bug b2; contains bug b4

v0.3: added Telegram image notifications (optional); contains bug b2, b4

v0.2: like v0.1, fixed the bug b1

v0.1: first release; contains bug b1

Known bugs:

b11: Outdated entries in the history list would not disappear. Fixed since v1.64.

b10: The window content of AiTool did not resize. Occurred in v1.63, fixed in v1.64.

b9: AiTool would crash during start on some devices, displaying an error message "out of range error... index = 0". Occurred in v1.56 and v1.62, fixed since v1.63.

b8: Updating the input path only took effect after restarting AiTool. Occurred in v1.43 to v1.56, fixed since v1.62.

b7: If an alert was analyzed while editing the camera settings, the unsaved changes disappeared. Fixed since v1.56.

b6: Sometimes the log says the resolution of the camera mask .png is too small although it isn't. Fixed since v1.55.

b5: The image cutout feature (stores cutouts containing the detected elements in 'Output Path' if specified) is 100% buggy as I haven't found time to get to the bottom of it yet. As long as no 'Output Path' is specified, this feature is disabled anyway.

b4: Telegram image upload sometimes fails. This probably has something to do with Telegram's anti-spam measures. Not fixed yet.

b3: If no trigger URL is specified, the program crashes. Occurred in v0.5, fixed since v0.6.

b2: Sometimes Telegram is not reachable, which caused the program to crash. Starting from v0.4 the program will then try to upload the alert photo again. If that does not work, the upload will be skipped. Annoyingly, the problem that Telegram uploads sometimes fail remains. Occurred in v0.3.

b1: When more images were found and analyzed after the first analysis, the program would not delete them. Occurred in v0.1, fixed since v0.2.


The changelog is now on Github: Commits · VorlonCD/bi-aidetection

Previously:

- v1.65

- substantially enhanced stability and speed of image analysis

- send alert images to multiple Telegram accounts

- enhanced history list loading speed

- fixed code that detects image loading errors

- upload image to Telegram if an error occurs

- added button to open log file from settings page

- Added milliseconds to log time when 'log everything' is checked

- further enhancements of the log

- several bugfixes

since v1.56

I spent a lot of time implementing the following features and ensuring that the latest AiTool release runs reliably:

- Quickly browse the alert history by filtering which entries are shown (specific camera, only relevant alerts, only irrelevant alerts).

- Set optional confidence limits so that an alert is only triggered if e.g. AiTool is more than 80% sure.

- If only irrelevant objects were detected in an image, the History tab now actually shows what was detected, where and how certain AiTool is. Irrelevant detections get a gray rectangle, relevant detections a red one.

- The cooldown can now be a decimal number, so e.g. "1.5" gives you 1:30 minutes of cooldown time.

- Added a detection Timeline in the Stats tab. Give it a few days to fill with data and you can use it as a reference to optimize the motion detection that feeds AiTool.

- Below the Timeline is a chart that shows how often AiTool reaches which confidence level in its detections. The green line shows the relevant detections and the yellow line the irrelevant detections.

- Errors and warnings are now automatically sent to your Telegram account if you added one.

- optimized Log

- A lot of under-the-hood work that enhances speed and stability (hopefully for everyone)

Key features:

- quickly analyze images stored in an input folder using DeepQuestAI for selected objects and humans

- call one or multiple trigger urls if a specified object is found

- send alert images to Telegram using a bot (optional)

- it can be configured which objects trigger an alert, for example person, bicycle, car etc.

- a cooldown can be set so that only one alert is sent per event

- If the software sends an alert, the cooldown time starts (e.g. 3 min). If more objects are detected during the following 3 minutes, the cooldown is reset. During this time, no further alerts will be triggered. If nothing is detected during the cooldown time, the specific camera will be "rearmed".

- view statistics for every camera

- camera-specific profiles

- mask image areas where nothing should be detected and set detection confidence limits
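The cooldown rule from the feature list can be sketched in a few lines of Python. This is only an illustration of the described behaviour, not AI Tool's actual code; the class and method names are made up for this example, and time is passed in as plain seconds.

```python
class Cooldown:
    """Per-camera cooldown as described in the feature list (hypothetical sketch)."""

    def __init__(self, minutes: float):
        # The cooldown may be a decimal number, e.g. 1.5 minutes = 90 seconds.
        self.seconds = minutes * 60.0
        # The camera starts out armed, so the first detection always alerts.
        self.armed_again_at = float("-inf")

    def on_detection(self, now: float) -> bool:
        """Return True if a detection at time `now` (in seconds) should alert."""
        if self.seconds <= 0:
            return True  # a cooldown of 0 disables the feature
        should_alert = now >= self.armed_again_at
        # Every detection, alerting or not, restarts the cooldown window.
        self.armed_again_at = now + self.seconds
        return should_alert
```

With a 3 minute cooldown, a detection at 0 s alerts, detections at 100 s and 200 s are suppressed (each one restarts the window), and the camera is only "rearmed" after 180 s without any detection.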

So how does the AI detection with Blue Iris work in general:

If Blue Iris detects an alert on a camera (motion detection or external input), a still picture is stored in the input folder of the AI Software. Every image file name starts with the camera's short name (in Blue Iris), which AI Tool uses to associate the image with a matching camera profile (the camera profile contains a set of rules on what to detect and what to do if something was detected by this camera). Then the image is analyzed and, if a relevant object was detected, the actions configured in the camera profile are executed, e.g. triggering the camera or giving the Blue Iris alert clip a flag.
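To make the file name matching more concrete, here is a small Python sketch. It is not taken from AI Tool; the function name, the case-insensitive comparison and the "longest prefix wins" rule are assumptions made for this illustration. Only the fallback to a default profile follows the setup description.

```python
def match_profile(file_name: str, prefixes: list[str], default: str = "default") -> str:
    """Return the camera prefix that an image file name starts with.

    Hypothetical sketch: falls back to `default` if no camera prefix matches.
    """
    lowered = file_name.lower()
    # Check longer prefixes first so that 'frontyard2' wins over 'frontyard'.
    for prefix in sorted(prefixes, key=len, reverse=True):
        if lowered.startswith(prefix.lower()):
            return prefix
    return default
```

For example, `match_profile("frontyard.20200326_054241.0.64.jpg", ["frontyard", "backyard"])` picks the 'frontyard' profile, while an image from an unknown camera ends up with the default profile.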

Blue Iris only creates a snapshot (that AI Tool can run AI analysis on) if the camera was triggered, so we basically have two options for filtering out false alerts:

A) We create an invisible duplicate of the original camera in Blue Iris and only trigger the original camera if an alert in the duplicate camera was confirmed by AI Tool. (standard procedure v1.65 and below)

B) Or we configure AI Tool to confirm alerts by flagging them in Blue Iris and tell Blue Iris to only present us flagged alerts. (recommended procedure since v1.67)

So the basic procedure is:

1. motion detection triggers a camera and a snapshot is saved into the 'Input Path'

2. AI Tool associates the image with a configured camera profile and sends the image to the locally installed DeepQuestAI instance to analyze the image.

3. DeepQuestAI analyzes the image and returns the results to AI Tool

4. If the image contains one of the 'relevant objects' specified in the camera's profile (in AI Tool), the actions specified for this camera are executed (flag alert in Blue Iris, send image to Telegram).
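Step 4 boils down to a simple decision, which could look roughly like this in Python. This is a sketch, not AI Tool's implementation: it assumes the analysis result is a list of dictionaries with a "label" key, and the three result names are chosen here to mirror the categories AI Tool shows in its statistics.

```python
def classify(predictions: list[dict], relevant: set[str]) -> str:
    """Sort one analysis result into 'false alert', 'irrelevant alert' or 'alert'."""
    if not predictions:
        return "false alert"  # nothing was detected at all
    labels = {p["label"].lower() for p in predictions}
    if labels & {r.lower() for r in relevant}:
        return "alert"  # at least one relevant object: run the camera's actions
    return "irrelevant alert"  # objects were found, but none the profile cares about
```

Only the "alert" result would lead to the configured actions (flagging the alert in Blue Iris, sending the image to Telegram).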


Interface Overview (Screenshots):

Overview Tab

Shows the current state (Processing/Running) and the software version, and informs you if an error occurred. Clicking on the red error message will then open the Log file.

If you use the Telegram feature, you have to expect about 1 error per week which is caused by the Telegram upload bug. Telegram blocks the upload of the image, the AI Software detects that, informs you and stores the image that could not be uploaded in [Software Main Directory]/errors/.
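The "keep the image if the upload fails" behaviour can be sketched like this. The function below is hypothetical and not AI Tool's code: the actual upload is passed in as a callable (so no Telegram API details are assumed), and the errors folder is just a parameter.

```python
import shutil
from pathlib import Path
from typing import Callable


def send_or_keep(image: Path, upload: Callable[[Path], None], errors_dir: Path) -> bool:
    """Try to upload `image`; on failure, copy it into `errors_dir`.

    Returns True if the upload succeeded, False if the image was kept instead.
    """
    try:
        upload(image)
        return True
    except Exception:
        # Any upload problem is treated the same way in this sketch:
        # keep a copy of the image so it can be checked manually later.
        errors_dir.mkdir(parents=True, exist_ok=True)
        shutil.copy2(image, errors_dir / image.name)
        return False
```

The point of this design is that a failed upload never loses the alert image and never stops the analysis of further images.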

Stats Tab

The Stats tab shows you statistics for a selected camera or for all cameras.

The 'Input Rates' statistic contains per-camera information on how many of the images that were checked contained a) no objects ('False Alerts'), b) irrelevant objects ('irrelevant Alerts') or c) relevant objects that finally caused an alert ('Alerts').

The Timeline shows how many relevant, irrelevant and false alerts were processed in each 30-minute period of the day. Currently the timeline shows all detections of all days stored in the database, so it's not a per-day chart. An option to select days or time windows is planned.

Both stats are meant to help you configure the sensitivity of BI's motion detection and compare the effectiveness of different configurations and technologies (software motion detection vs. PIR sensors). If you have a separate day and night profile in BI, the Timeline might help you adapt the profile settings to lower unnecessary load.
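The 30-minute grouping of the Timeline can be illustrated with a short Python sketch. It is not AI Tool's code; the function name and the slot numbering (0 for 00:00-00:29 up to 47 for 23:30-23:59) are choices made for this example.

```python
from collections import Counter
from datetime import datetime


def timeline(timestamps: list[datetime]) -> Counter:
    """Count detections per 30-minute slot of the day (0..47).

    The date is ignored, so detections from all days end up in the same slots.
    """
    return Counter((t.hour * 60 + t.minute) // 30 for t in timestamps)
```

An alert at 05:42 on one day and one at 05:59 on another day both land in slot 11 (05:30-05:59), which is why the chart is not a per-day chart.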

The chart "Frequency of alert result confidences" shows how often AiTool reaches which confidence level for relevant detections (green) and irrelevant detections (orange). This might help you set a confidence threshold that cuts out false positives and does not hide relevant alerts from you.
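The idea behind this chart is a plain histogram over the confidence values. A minimal Python sketch, assuming confidences are given as percentages from 0 to 100 and grouped into 10% wide bins (the bin width is an assumption, not something AI Tool documents):

```python
def confidence_histogram(confidences: list[float]) -> dict[int, int]:
    """Count detections per 10%-wide confidence bin, keyed by the bin's lower edge."""
    bins = {start: 0 for start in range(0, 100, 10)}
    for c in confidences:
        # A confidence of exactly 100 is counted in the top bin (90-100).
        start = min(int(c // 10) * 10, 90)
        bins[start] += 1
    return bins
```

If the false positives pile up in the 40-60% bins while correct alerts sit at 80% and above, a lower confidence limit somewhere in between would separate the two groups.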

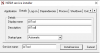

History Tab

Shows alert images that recently passed analysis together with the time and date the still was taken, the camera it was associated with and whether (✓) or not (X) relevant objects were detected in it.

For convenient browsing, the left list can show the exact objects that were detected if the AI Tool Window is larger (i.e. maximized).

Using the filter options it's possible to only show the alerts for one camera and only show relevant or irrelevant alerts.

Enabling 'Show Objects' will mark all relevant detected objects in the image with red rectangles and 'Show Mask' will additionally show the areas of the selected camera where detections will not cause an alert.
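Since v1.56, AI Tool uses 9 detection points to decide whether an object is covered by the mask. How exactly the points are placed and evaluated is not described here, so the following Python sketch is only one possible interpretation: a 3x3 grid inside the object's rectangle, with the object counted as covered if at least 5 of the 9 points are masked. The function names, the grid layout and the threshold are all assumptions.

```python
from typing import Callable


def grid_points(x_min: float, y_min: float, x_max: float, y_max: float, n: int = 3):
    """Return n*n points spread evenly inside the rectangle (edges excluded)."""
    xs = [x_min + (x_max - x_min) * (i + 1) / (n + 1) for i in range(n)]
    ys = [y_min + (y_max - y_min) * (j + 1) / (n + 1) for j in range(n)]
    return [(x, y) for y in ys for x in xs]


def is_covered(box, is_masked: Callable[[float, float], bool], min_hits: int = 5) -> bool:
    """Treat the object as masked if at least `min_hits` grid points are masked.

    `box` is (x_min, y_min, x_max, y_max); `is_masked(x, y)` looks up the mask.
    """
    hits = sum(1 for x, y in grid_points(*box) if is_masked(x, y))
    return hits >= min_hits
```

Compared to a single detection point, several points make the result less sensitive to objects that only slightly overlap the masked area.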

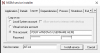

Cameras Tab

Here you can give every camera its own profile, specifying which objects cause an alert, which actions should be executed when one of these objects is detected on this specific camera and the individual camera cooldown time. Furthermore, you can set a confidence range, so that detections with a lower or higher confidence do not cause an alert.
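How a profile's object list and confidence range work together can be sketched as a small filter. This is an illustration only: the function name is made up, detections are assumed to be (label, confidence in percent) pairs, and treating both limits as inclusive is an assumption.

```python
def alerting_detections(
    detections: list[tuple[str, float]],
    relevant: set[str],
    lower: float = 0.0,
    upper: float = 100.0,
) -> list[tuple[str, float]]:
    """Keep only detections that are relevant and inside the confidence range."""
    return [
        (label, confidence)
        for label, confidence in detections
        if label in relevant and lower <= confidence <= upper
    ]
```

With 'person' as the only relevant object and a lower limit of 80, a person detected at 92% causes an alert, while a person at 45% and a dog at 99% do not.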

Settings Tab

Here you tell the software where Blue Iris saves the alert images ('Input Path') and the DeepStack URL ("localhost:81").

In case you want to use the Telegram feature, you input the Telegram credentials here as well.

If you experience errors, you can enable 'Log everything' for troubleshooting purposes. The AI Software will then log most of what it does into the Log.txt file you can find in the Software Main Directory (where 'aitool.exe' is stored).

Setup:

The setup seems to be quite complicated, but it's actually quite simple; I'm just writing a lot to help you understand how the software works.

Overview:

1. Install DeepQuestAI

2. Configure Blue Iris

3. Configure the AI Software according to your setup and requirements

optional: Send alert images to Telegram

optional: Create a detection mask

optional: Run AI Tool as a Windows Service

While setting up everything, I'd like to ask you to mind AI Tool's only Term of Use:

use AI Tool only on your own property, don't use it to analyze the premises of your neighbors or public areas.

The key difference between this setup guide and the setup guide for version 1.65 and below is that AI Tool now confirms alerts by flagging them, whereas the previous setup required duplicate cameras (one motion detection camera and one camera that would only be triggered if an alert was confirmed by AI Tool).

1. Install DeepQuestAI

You can either run a Windows version with a graphical user interface, or a more up-to-date Docker version.

DeepQuestAI for Windows: (no longer requires activation)

1.1 Although free, DeepQuestAI needs an API key, so we have to register an account. Create an account at Sign Up, choose the free plan (that is sufficient for our use), go to the portal (Dashboard), click 'Install DeepStack', select 'Windows' and download the installer. While downloading, you can return to the dashboard and copy your API key. Notice that on the Dashboard it says 'Expires: Unlimited'.

1.2 As soon as DeepQuestAI is installed, start DeepQuestAI (from now on I'll call it DQAI), hit 'Start Server', input your API key, select the 'Detection API' and change the port from 80 (Blue Iris needs this port for UI3) to e.g. 81. Finally click 'Start Now'.

1.3 You will rarely need it, but the web interface of DQAI is now accessible by opening "localhost:81" with your web browser. Other devices on your network can access the interface using your Blue Iris IP and port 81, so e.g. "192.168.178.2:81". Notice that the web interface now gives an expiry date (2 years). I don't know if the API key expires or not, but getting a new API key every 2 years isn't a great problem imho.

DeepQuestAI for Docker: (no longer requires activation)

1.1 Download DeepStack using Docker Desktop or the command line:

docker pull deepquestai/deepstack:cpu-x3-beta

or, if you have an NVIDIA GPU installed (much faster):

docker pull deepquestai/deepstack:gpu-x3-beta

1.2 Set up the container.

For CPU:

docker run --restart=always -e VISION-DETECTION=True -v localstorage:/datastore -p 81:5000 --name deepstack deepquestai/deepstack:cpu-x3-beta

For GPU:

docker run --restart=always -e VISION-DETECTION=True -v localstorage:/datastore -p 81:5000 --name deepstack deepquestai/deepstack:gpu-x3-beta

"-e MODE=Low" is optional (Default is medium). It's not as accurate but gives a faster result.

"--restart=always" will keep it running if it stops or if the PC is restarted.

In the above example it runs on port 81.

Now the actual software that analyzes the images is already running.
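If you want to check that the server really answers before continuing, you can send it a test image yourself. The following Python sketch uses only the standard library. It assumes the server listens on port 81 as configured above and that detection is offered at the path /v1/vision/detection with the image in a form field called "image" (this is how I understand the DeepStack detection API; please verify against the DeepStack documentation for your version). The function names are made up for this example.

```python
import json
import urllib.request
import uuid


def build_multipart(field: str, file_name: str, data: bytes) -> tuple[bytes, str]:
    """Encode one file as multipart/form-data; returns (body, content_type)."""
    boundary = uuid.uuid4().hex
    head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="{field}"; filename="{file_name}"\r\n'
        "Content-Type: application/octet-stream\r\n\r\n"
    ).encode("ascii")
    tail = f"\r\n--{boundary}--\r\n".encode("ascii")
    return head + data + tail, f"multipart/form-data; boundary={boundary}"


def detect(image_path: str, base_url: str = "http://localhost:81") -> list[dict]:
    """POST an image to the (assumed) detection endpoint and return its predictions."""
    with open(image_path, "rb") as f:
        body, content_type = build_multipart("image", "image.jpg", f.read())
    request = urllib.request.Request(
        f"{base_url}/v1/vision/detection",
        data=body,
        headers={"Content-Type": content_type},
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        result = json.load(response)
    # An empty list is returned if the answer contains no 'predictions' entry.
    return result.get("predictions", [])
```

Calling `detect("test.jpg")` with any photo of a person or car should then return a non-empty list if the server is running and the port is right.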

Now you can choose between two types of configuration. The recommended setup receives motion detection alerts from a camera in Blue Iris and flags them in Blue Iris if something was detected using AI.

The alternative (older) method involves setting up a duplicate camera for every camera you want to apply AI to. For each physical camera, you will have one hidden camera in BI that only detects motion and sends alert images to AI Tool, and a visible one that gets triggered if the alert contains e.g. a human and is therefore confirmed by AI.

2. Configure Blue Iris


! Create a backup of your Blue Iris configuration first. Once the AI is running you certainly won't want to revert what you did here, but in case nothing works afterwards, just going back to a backup config is a very convenient troubleshooter.

2.1 Create 'Input Path' folder

We need to create a folder where BI can store snapshots if motion occurs, so that AI Tool can analyze them. We add this path to Blue Iris by opening the settings of Blue Iris, then 'Clips and archiving', then clicking on one of the aux folders in the list on the left (if you click on e.g. 'Aux_7' and don't move the cursor for 1-2s, you will be able to change the displayed name) and then creating a new folder in the Blue Iris main directory. We can name this folder for example "aiinput". We can furthermore limit the folder size to e.g. 5 GB or set a time limit, so that old images are automatically removed.

2.2 Enable URL flagging

To enable URL actions like flagging an alert, we have to do the following in Blue Iris:

1. go to Settings->Webserver->Advanced and disable 'use secure session keys and login page'.

2. go to Settings->Users and either select a user and copy the password, or create a new administrator user. The credentials will be needed in step 3.4.6 to make the flagging URL.

2.3 Configure each camera

This step needs to be done for every camera and every profile.

Go to Record, check 'JPEG snapshot each (mm:ss)', select the "aiinput" folder from step 2.1, check the box 'Only when triggered' and set the interval to e.g. 0:04.0 (one image every 4 seconds).

Now go to 'Trigger', check 'Capture an alert list image' and set the Break time 'End trigger unless retriggered' to e.g. 3s, so that a short alert only causes one image to analyze. If you think that AI Tool might overlook an object "on first sight" because it's only partly visible, you can also make the break time longer than the 4s interval. In this case, multiple images will be analyzed by AI Tool.

Finally, go to Alerts and uncheck all motion zones. Make sure that 'External' is checked.

3. Configure AI Tool

Set up and configure the AI Software

3.1 Download the latest version (v1.67 and up) of the attached program, unzip it where you want and start 'aitool.exe'.

3.2 Go to the 'Settings' tab, add the 'Input Path' we created in step 2.1 and ensure that the 'Deepstack URL' is correct. Hit 'Save'.

Configure the individual camera profiles

AI Tool already contains a default profile which will be applied to an incoming image if no specific profile was defined for the alerting camera. That is useful, but we will want to give every camera its own profile, so that the specific camera can be triggered in Blue Iris etc.

3.4 The following steps must be repeated for every camera and will be conducted using the example cam 'frontyard'. It is recommended to use the short names from Blue Iris in AI Tool too.

.1 Open the 'Cameras' tab, click 'Add Camera', name the camera like the short name in Blue Iris "frontyard" and hit ENTER.

.2 Blue Iris will store the alert images under names that start with the camera's short name, so e.g. 'frontyard.20200326_054241.0.64.jpg'. As we store the alert images of multiple cameras in one input folder, we must filter out each camera's images by setting the option 'Input file begins with:' to the name of our camera, in this case 'frontyard'. This should usually already be filled in by AI Tool automatically.

.3 Check all objects that you want to trigger an alert on this camera, e.g. 'Person' and 'Car'.

.4 Input a 'Cooldown Time', you could try 3 minutes. 0 minutes means that the cooldown feature is disabled on this camera. During the first half of the cooldown (after a detection) no images will be analyzed to save on CPU.

.5 It is not recommended to set confidence limits at the beginning. Rather, adjust the limits if you repeatedly receive false positive alerts with e.g. a low confidence of around 40-60% while your correct alerts usually have a confidence of 80-100%.

.6 The 'Trigger URL(s)' needs to be filled with these two URLs to trigger and then flag a specific alert in Blue Iris.

Take the two following url templates, replace {user} and {password} and add both separated by comma to AI Tool:

http://localhost:80/admin?camera=[camera]&trigger&user={user}&pw={passwort}http://localhost:80/admin?camera=[camera]&flagalert=1&trigger&memo=[summary]&user={user}&pw={passwort}Every URL should start with 'http://'.

Meanwhile, the trigger URL is not limited to Blue Iris, practically everything can be triggered that has such an URL that one can call for a trigger (p.e. home automation). If you also want to call other urls if something was detected, you can add them separated by comma (or just press SPACE).

If you filled in everything, copy/paste the whole url into your webbrowser and make sure it causes an alert on the camera 'frontyard'. Finally, input the URL into the 'Trigger URL(s)' field.

.7 Hit 'Save'.

Leave 'Send alert images to Telegram' unchecked for now, you can activate it later.
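As a rough illustration of step .6, the two trigger URLs could be assembled like this. This is only a sketch and not part of AI Tool: the helper name build_trigger_urls is made up, and percent-encoding the credentials is my own assumption to keep characters like '&' from breaking the query string. The placeholders [camera] and [summary] are deliberately left untouched, since they appear as such in the templates of step .6.

```python
from urllib.parse import quote

# Based on the two templates from step .6.
# [camera] and [summary] stay as literal placeholders in the result.
TRIGGER = "http://localhost:80/admin?camera=[camera]&trigger&user={user}&pw={password}"
FLAG = ("http://localhost:80/admin?camera=[camera]&flagalert=1&trigger"
        "&memo=[summary]&user={user}&pw={password}")


def build_trigger_urls(user: str, password: str) -> str:
    """Return both URLs, comma-separated, for the 'Trigger URL(s)' field.

    Hypothetical helper for illustration only.
    """
    # Assumption: credentials are percent-encoded so special characters
    # cannot be mistaken for query-string separators.
    user_q = quote(user, safe="")
    password_q = quote(password, safe="")
    urls = [t.format(user=user_q, password=password_q) for t in (TRIGGER, FLAG)]
    return ",".join(urls)


if __name__ == "__main__":
    print(build_trigger_urls("admin", "todsicher"))
```

The printed line can be pasted into the 'Trigger URL(s)' field; each half can also be tested separately in a web browser first.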

Blue Iris only creates an alert image that can be analyzed when the camera is triggered, so we have to duplicate the camera we want to monitor using AI to ensure that an alert is caused only if the AI actually detected something. Therefore we create a camera duplicate in Blue Iris that acts as the AI input and is configured to be quite sensitive in order to catch possible movements. The stills coming from this camera are then analyzed by the AI Software, and only if something relevant is detected is the actual camera triggered. This camera, of course, should not be triggered by motion detection but only by the AI Software.

This might sound a bit difficult, but as Blue Iris is a very sophisticated piece of software, the camera duplicates don't even need additional resources. Chapeau!

2. Configure Blue Iris

! Create a backup of your Blue Iris configuration first. Once the AI is running you certainly won't want to revert what you did here, but in case nothing works afterwards, just going back to a backup config is a very convenient troubleshooter.

I assume you are familiar with Blue Iris, so I'll keep the description simple. You have to do steps 2.3 - 2.6 for every camera that you want to be analyzed by the AI Software.


2.1 Create 'Input Path' folder

We need a directory where BI stores all the images possibly containing alerts. We can already add this path to Blue Iris by opening the settings of Blue Iris, then 'Clips and archiving', then clicking on one of the aux folders in the list on the left (if you click on e.g. 'Aux_7' and don't move the cursor for 1-2s, you will be able to change the displayed name) and then creating a new folder in the Blue Iris main directory. We can name this folder "aiinput", for example. We can furthermore limit the folder size to e.g. 5 GB, so that old images are automatically removed.

2.2 Enable URL triggering feature in Blue Iris

URL triggering is disabled by default, so to be able to trigger a camera in Blue Iris via URL, you have to do the following in Blue Iris:

1. Go to Settings->Webserver->Advanced and disable 'use secure session keys and login page'.

2. Go to Settings->Users and either select a user and copy the password, or create a new administrator user. The credentials will be needed in step 3.4.6 to build the trigger URL.

2.3 Duplicate camera

Now we have to create a camera duplicate whose only purpose is to save an image when motion is detected, so that the AI Software can analyze it. So add a new camera, give it a name that makes sense (e.g. if your original camera was called 'frontyard', call it 'aifrontyard'), and under type select 'copy from another camera' and choose the appropriate one.

2.4 Disable unnecessary stuff in the duplicated camera

Keep in mind that this camera's only job is to detect motion and then save a still image into the folder we created in 2.1, so disable all features on this camera that are not needed (recording, pretrigger, etc.). Because Blue Iris is already prepared to work with camera clones, it is not necessary to lower the resolution to save on CPU resources. Quite the opposite: if the camera stream URL isn't changed, there will be zero additional CPU usage. Changing the stream URL to e.g. a profile with a lower resolution will instead cause additional CPU load.

Additionally, you can go to the 'General' tab and check 'Hidden', which will hide this duplicate camera from the Blue Iris UI3 (otherwise you suddenly have twice as many cameras as before). This is really useful, as it keeps your Live View page tidy.

2.5 Store alert images in 'Input Path'

Now go to 'Record', check 'JPEG snapshot each (mm:ss)', select the folder you created in step 2.1, check the box 'Only when triggered' and set the interval to e.g. 0:05.0 (one image every 5 seconds). Furthermore, you might want to disable 'Create Alert list images when triggered', because otherwise a lot of false-alarm images (remember, we set the motion detection to be very sensitive) will be stored in your alerts folder.

Now go to 'Trigger', check 'Capture an alert list image' and set the break time 'End trigger unless retriggered' to e.g. 4s, so that a short alert only causes one image to be analyzed. If you think that the AI Software might overlook an object "at first sight" because it's only partly visible (which most of the time is no problem at all for the AI Software), you can also make the break time longer than the 5s interval. In this case, multiple images will be analyzed by the AI Software.

2.6 Disable motion detection for original camera

Finally, we have to disable motion detection and other triggers on the original camera ('frontyard'), so that nothing except the AI Software triggers the original camera. To do that, we open the camera settings of our original camera, go to 'Trigger' and uncheck all boxes in the 'Sources' area.

As the new AI Software continuously runs in the system tray, it is no longer necessary to call the program every time a new image is created. So in case you already used previous versions of the AI Software, you should disable 'run a program or execute a script' in the 'Alerts' tab.

Furthermore, in case you are working with multiple profiles, make sure to apply all changes to all profiles.

So now you should have every camera twice: one that inputs potential alerts and a second one that is only triggered if something is actually detected.

3. Configure the program according to your setup and requirements

Setup and configure the AI Software

3.1 Download the latest version of the attached program, unzip it where you want and start 'aitool.exe'.

3.2 Go to the 'Settings' tab, add the 'Input Path' we created in step 2.1 and ensure that the 'Deepstack URL' is correct. Hit 'Save'.

3.3 The AI Software already contains a default profile. As long as no other profile matches an input image, whatever is specified in the Default profile will happen. That is useful, but we will want to give every camera its own profile, so that the specific camera can be triggered in Blue Iris, etc.

Configure the individual camera profiles

3.4 The following steps must be repeated for every camera. They are shown using the example camera 'frontyard', for which we created the input camera 'aifrontyard' by following steps 2.1 to 2.6. So the alert image names start with 'aifrontyard', and the camera 'frontyard' is the actual camera that we use to record and watch via UI3.

.1 Open the 'Cameras' tab, click 'Add Camera', name the camera "frontyard" and hit ENTER.

.2 Blue Iris will store the alert images under names that start with the camera name, e.g. 'aifrontyard.20180326_054241.0.64.jpg'. As we store the alert images of multiple cameras in one input folder, we must filter out the images from the duplicated camera. Select the created entry 'frontyard' and type "aifrontyard" into the field 'Input file begins with:' to ensure that all images from the camera 'aifrontyard' are allocated to this profile.

.3 Check all objects that you want to trigger an alert on this camera, e.g. 'Person' and 'Car'.

.4 Enter a 'Cooldown Time'; you could try 3 minutes. 0 minutes means that the cooldown feature is disabled on this camera.

.5 It is not recommended to restrict the confidence limits at the beginning. Instead, adjust the limits only if you repeatedly receive false positive alerts with e.g. a low confidence of around 30% while your correct alerts usually have a confidence of 80-100%. Then you could set a confidence threshold at maybe 40% (lower limit).

.6 If we don't specify one or multiple 'Trigger URL(s)', no trigger call will be made. If we want to call multiple URLs, we have to separate the URLs with commas. Every URL should start with 'http://'. The trigger URL is not limited to Blue Iris: practically anything that offers a URL which can be called as a trigger (e.g. home automation) can be triggered.

Take the following url template and replace user, password (both from step 2.2) and the short cam name with yours:

http://localhost:80/admin?trigger&camera=[short cam name]&user=[user]&pw=[password]

In our example with the admin account name "admin" and the password "todsicher":

http://localhost:80/admin?trigger&camera=frontyard&user=admin&pw=todsicher

If you filled in everything, copy/paste the whole url into your webbrowser and make sure it causes an alert on the camera 'frontyard'. Finally, input the URL into the 'Trigger URL(s)' field.

.7 Hit 'Save'.

Leave 'Send alert images to Telegram' unchecked for now, you can activate it later.
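To make the idea behind 'Input file begins with:' and the Default profile a bit more tangible, here is a small sketch of how an image file name could be mapped to a camera profile. It is not AI Tool's actual code: the function name match_profile, the case-insensitive comparison and the "longest prefix wins" rule are assumptions of mine.

```python
from typing import Dict, Optional


def match_profile(filename: str, prefixes: Dict[str, str],
                  default: str = "Default") -> str:
    """Return the profile whose 'Input file begins with' prefix matches.

    prefixes maps profile name -> prefix, e.g. {"frontyard": "aifrontyard"}.
    Hypothetical helper; the matching rules are assumptions.
    """
    name = filename.lower()
    best: Optional[str] = None
    best_len = -1
    for profile, prefix in prefixes.items():
        # An empty prefix would match every file, so it is skipped here.
        if prefix and name.startswith(prefix.lower()) and len(prefix) > best_len:
            best, best_len = profile, len(prefix)
    # Fall back to the default profile if no camera profile matches.
    return best if best is not None else default


if __name__ == "__main__":
    profiles = {"frontyard": "aifrontyard", "garage": "aigarage"}
    print(match_profile("aifrontyard.20180326_054241.0.64.jpg", profiles))
    print(match_profile("driveway.20180326_054241.0.64.jpg", profiles))
```

The first call ends up in the 'frontyard' profile, the second one in the Default profile because no prefix matches.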

The program can send alert images (and error information) to your Telegram account using a Telegram bot. The program needs two strings to connect to Telegram, 1. the Telegram Bot Token and 2. the chat-id of the chat between you and the bot:


4.1 Create a bot and get the Bot Token:

4.1.1 Contact BotFather on Telegram.

4.1.2 Use the /newbot command to create a new bot. The BotFather will ask you for a name and username and then generate the Bot Token for your bot, along the lines of

110201543:AAHdqTcvCH1vGWJxfSeofSAs0K5PALDsaw

4.2 Now contact the bot you created with the Telegram account you want to receive the notifications on.

4.3 Retrieve the chat-id:

4.3.1 Replace [Bot Token] with your token from step 4.1 and then open

https://api.telegram.org/bot[Bot Token]/getUpdates

4.3.2 Look for the "chat" object and copy the chat id (the number in its "id" field).

4.4 Open the AI Software, head over to 'Settings', input 'Telegram Token' and 'Telegram Chat ID' and hit 'Save'.

4.5 Enable the option 'Send alert images to Telegram' for all cameras of which you want to send alert images using Telegram.

Multiple recipients: If you want to send alert images to multiple Telegram accounts (yours, your partner's, etc.), you need to repeat step 4.2 for every account, copy the chat-ids of all chat objects in step 4.3 and enter the chat-ids in step 4.4 separated by commas ("12345678, 87654321"). Alternatively, you can simply add the bot to a group (e.g. your family's Telegram group) which should receive the alert images.
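If you don't want to search the getUpdates output by eye, the chat ids can also be pulled out with a few lines of Python. This is only a sketch: it assumes the response has the usual Bot API shape (a "result" list whose entries carry a "message" with a "chat" object), the function names are made up, and the sample JSON below is a shortened, invented example rather than real output.

```python
import json
from typing import List


def chat_ids_from_updates(raw_json: str) -> List[int]:
    """Collect the distinct chat ids found in a getUpdates response body."""
    data = json.loads(raw_json)
    ids: List[int] = []
    for update in data.get("result", []):
        # Only plain messages are considered in this sketch.
        chat = update.get("message", {}).get("chat", {})
        chat_id = chat.get("id")
        if chat_id is not None and chat_id not in ids:
            ids.append(chat_id)
    return ids


def parse_chat_id_field(field: str) -> List[int]:
    """Split a comma-separated chat-id string like '12345678, 87654321'."""
    return [int(part) for part in field.split(",") if part.strip()]


if __name__ == "__main__":
    # Shortened, invented sample of a getUpdates response.
    sample = ('{"ok": true, "result": [{"update_id": 1, "message": '
              '{"chat": {"id": 12345678, "type": "private"}, "text": "hi"}}]}')
    print(chat_ids_from_updates(sample))
    print(parse_chat_id_field("12345678, 87654321"))
```

To use it, paste the text shown in the browser in step 4.3.1 into a string (or a file) and pass it to chat_ids_from_updates.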

You can define a detection mask for every camera to keep possible detections in the masked areas from causing alerts. This is very useful and an excellent solution if the AI detection keeps finding false objects in one area. Furthermore, if it's impossible that something will ever be detected in a certain area (e.g. the sky), masking this area will additionally prevent false detections there.


The privacy mask currently must be created using an external paint program, e.g. Paint.Net.

The mask file needs to have exactly the same resolution as the camera images that are put into the Input folder. The mask must be stored as a .png file in the subdirectory ./cameras/ (where the camera profile files are stored as well).

The mask image needs to have the same name as the profile file for the selected camera. So if the profile file is 'Garage indoor.txt', the mask file for this camera needs to be called 'Garage indoor.png'.

All areas in the image that have an opacity of 10 or more are masked (where 255 is 100% solid), so you can paint with an opacity of e.g. 150 so that later on you can still see through the masked areas of the overlay in the history tab of AI Tool. You can select any color you like; each one will work.

Using Paint.Net, the following is very convenient:

1. load a still of the selected camera (p.e. from the Input folder)

2. add a second layer and paint the mask in the 2nd layer

3. then remove the first layer containing the camera image

4. save the image using the correct name as a .png into ./cameras/

Hint: In very rare cases BI alters the image resolution for some reason, which then causes an error message because the mask image and the analyzed image have different dimensions. If that happens, you can open BI and go into the 'Video' tab of the affected AI input camera. There you enable 'Anamorphic (force size)' and set the stream dimensions correctly.
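The two mask rules above (same resolution as the camera image, opacity of 10 or more counts as masked) can be sketched in a few lines. Please treat this as an illustration only: the alpha channel is represented as a plain list of rows instead of being read from the .png (an imaging library would be needed for that), the function names are made up, and how AI Tool actually decides whether a detected object counts as masked is not described here, so the "fraction of the box that is masked" is purely my assumption.

```python
from typing import List, Tuple

MASK_ALPHA_THRESHOLD = 10  # from the text: opacity of 10 or more is masked

Box = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max), max exclusive


def same_resolution(alpha: List[List[int]], width: int, height: int) -> bool:
    """Check that the mask has exactly the resolution of the camera image."""
    return len(alpha) == height and all(len(row) == width for row in alpha)


def masked_fraction(alpha: List[List[int]], box: Box) -> float:
    """Return the share of pixels inside the box that are masked.

    Hypothetical rule for illustration, not AI Tool's documented behavior.
    """
    x_min, y_min, x_max, y_max = box
    total = (x_max - x_min) * (y_max - y_min)
    if total <= 0:
        return 0.0
    masked = sum(
        1
        for y in range(y_min, y_max)
        for x in range(x_min, x_max)
        if alpha[y][x] >= MASK_ALPHA_THRESHOLD
    )
    return masked / total


if __name__ == "__main__":
    # Tiny 4x4 "mask": left half painted with opacity 150, right half clear.
    alpha = [[150, 150, 0, 0] for _ in range(4)]
    print(same_resolution(alpha, 4, 4))
    print(masked_fraction(alpha, (0, 0, 2, 2)))
    print(masked_fraction(alpha, (1, 0, 3, 2)))
```

A resolution check like same_resolution corresponds to the error described in the hint: if BI changes the image size, the mask no longer fits.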

For AITool to be able to run as Windows service a third-party program is required – NSSM (or Non-Sucking Service Manager).

As the DeepQuestAI (DQAI) Windows version doesn't support autostart yet, the DQAI Docker version is required for the following (otherwise AI Tool will be running as a service, but DQAI won't be started). You can find the install guide for DQAI Docker in the 'deprecated CMD Version (v0.1 - v0.6)' spoiler.

Please follow these steps:


1. Download NSSM from here: Direct Download or open Download Page

2. Extract it to a folder on your hard drive

3. Open an administrative command prompt

3.1 Win 10: press the Search button, Win7: open the Start menu

3.2 Type in cmd

3.3 Right click on Command Prompt and select Run as administrator

3.4 Click Yes on the prompt

4. Within the CMD, navigate to where you have extracted NSSM (e.g. type cd / and press enter, cd nssm-2.24-101-g897c7ad and press enter, cd win32 and press enter)

5. In CMD now type nssm.exe install AITool and press enter

6. You will be presented with the NSSM GUI. You need to:

6.1 Browse to the AITool path and double click on the AITool.exe

6.2 Ensure the startup directory is auto-filled with the path to the AITool.exe folder

6.3 Ensure the Service name is correct

6.4 Click on Details and fill out Display name and description (for example AITool in both)

6.5 Click on Log On and select This account and enter your Windows username and password (password needs to be entered twice in the correct boxes)

6.6 Press Install service. If you get a success message, press OK and reboot your Windows PC.

7. After reboot check services and ensure the AITool service is running

8. Without manually running AITool, generate some valid alerts and ensure they are being sent to your mobile/tablet device.

Many thanks to MnM for testing and describing this solution!

This will keep all the camera profiles and the software settings:

- Close the old AI Tool.

- Delete everything in the software main folder (where the old aitool.exe is located), except the /cameras subfolder.

- Open the zip containing the new version.

- Extract everything except the /cameras subfolder into the software main folder.
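For those who update often, the four steps above could be scripted roughly like this. This is a sketch under assumptions, so please try it on a copy first: the function name update_install is made up, and it assumes that the zip contains the program files directly at its top level (not wrapped in an extra folder). AI Tool must be closed before running it.

```python
import shutil
import zipfile
from pathlib import Path

KEEP = "cameras"  # subfolder that must survive the update


def update_install(main_dir: Path, new_zip: Path) -> None:
    """Replace the program files in main_dir, keeping the cameras subfolder.

    Hypothetical helper; assumes the zip has its files at the top level.
    """
    # Delete everything in the main folder except the cameras subfolder.
    for entry in main_dir.iterdir():
        if entry.name.lower() == KEEP:
            continue
        if entry.is_dir():
            shutil.rmtree(entry)
        else:
            entry.unlink()

    # Extract everything from the zip except its own cameras subfolder.
    with zipfile.ZipFile(new_zip) as archive:
        for member in archive.namelist():
            top_level = member.split("/", 1)[0]
            if top_level.lower() == KEEP:
                continue
            archive.extract(member, main_dir)


if __name__ == "__main__":
    # Example paths only; adjust them to your own installation.
    update_install(Path("C:/AITool"), Path("C:/Downloads/aitool_new.zip"))
```

The paths in the last lines are placeholders and need to be replaced with your own folder and zip file.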

Problem: AI Tool does not reach Deepstack

1. Confirm that Deepstack is

- installed

- started with the Detection API selected

- on the correct port and not on the same port that Blue Iris or any other service on this PC uses

- activated

- reachable by typing the Deepstack URL from the AI Tool settings into a web browser.

2. Windows version: Check if Deepstack has silently crashed

Often you can't see that anywhere in the user interface of Deepstack. Open the Task Manager and check whether there are multiple python.exe processes which altogether require around 1GB of RAM. If that's not the case, Deepstack has silently crashed. Now you can

- have a look at the Windows Event Viewer to check why Python crashed (e.g. AVX isn't supported)

- install the Docker version of Deepstack instead of the Windows application

2. Docker version: Deepstack container might have crashed

Check the Docker log to find out if the container crashed and why. Common reasons are:

- Hyper-V / hardware virtualization is disabled (either in the BIOS or, in case you're using one, in the virtual machine)

- AVX isn't supported by your system: download the Docker container with parameter -noavx
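The "can be reached" check from point 1 can also be done from a script instead of a web browser. The following is a minimal sketch with a made-up function name; it only tells you whether something answers at the given URL, not whether that something really is Deepstack with the Detection API enabled.

```python
import urllib.error
import urllib.request


def deepstack_reachable(url: str, timeout: float = 3.0) -> bool:
    """Return True if an HTTP server answers at url, False otherwise."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server answered, just with an error status code.
        return True
    except (OSError, ValueError):
        # Connection refused, timeout, unknown host or a malformed URL
        # (for example one that does not start with 'http://').
        return False


if __name__ == "__main__":
    # Example address taken from the deprecated Docker setup; use the
    # Deepstack URL from your own AI Tool settings instead.
    print(deepstack_reachable("http://192.168.99.100:80"))
```

If this prints False while the URL looks correct, continue with point 2 and check whether Deepstack or its container has crashed.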

I hope that the AI Software works fine for you.

deprecated CMD Version (v0.1 - v0.6)

Screenshot of the program running and the output folder in the background:


Key features:

- analyze images stored in an input folder using DeepQuestAI for certain objects and humans

- call one or multiple urls if a triggering object is found (optional although this actually is the main functionality)

- it can be configured which objects trigger an alert, for example person, bicycle, car etc.

- save cutouts containing the detected objects in an output folder (optional)

- objects that trigger an alert are saved in the output folder

- objects that do not trigger an alert but were detected anyway are saved in a subfolder '/other objects/' of the output folder

- send alert images to Telegram using a bot (optional)

- only analyze images in the input folder that start with a certain string

- blue iris alert images natively start with the camera name, so using this feature the program only analyzes images from a specific camera

- delay the analysis start by x seconds and run another analysis if, during the previous analysis, new images were saved in the input folder

- delete images that were analyzed

Who is this for:

Everyone who

a) has qualms about sending private CCTV data to third parties and

b) does not want to pay a monthly fee (because this AI solution presented here is completely free) and

c) maybe even has an NVidia GPU that speeds up AI analysis from multiple seconds without a GPU to split seconds.

Who is this not necessarily for:

Everyone who

a) does not have enough CPU performance left (Docker running the AI Software is demanding) and

b) needs high reliability and stability. This is the first larger program I've written, so you might have to expect unexpected behavior and sudden crashes.

So how does the AI detection with BlueIris work in general:

The software can only analyze .jpg images, so we have to get such an image every time BlueIris thinks there might be motion. Blue Iris actually has this feature integrated (it is used to work with the Sentry AI), but it is not accessible to programs like mine, so we have to find another way.

We simply duplicate the camera in Blue Iris. In the camera duplicate, we disable recording and all other CPU-heavy features (Optimizing Blue Iris's CPU Usage | IP Cam Talk) and then we configure the motion detection to be quite sensitive. Furthermore, we configure that every time a motion is detected, an image should be taken and the AI analysis program should be started.

The program then analyzes the image taken and, in case a person (or whatever we configured to cause an alert) is found in it, the program will call the Blue Iris alert URL for the original camera, so this camera (not the duplicate we created) is triggered. Because the AI software needs some time (per image: a split second with a decent NVidia GPU and 5-15s without a GPU), we have to set the pre-trigger buffer of the original camera to the delay time.
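The decision "is a relevant object in the image?" can be sketched as follows. This is not the program's actual code. It assumes that the detection result is a JSON object with a "predictions" list whose entries have a "label" and a "confidence" between 0 and 1, which is how I understand the DeepStack detection response; the function names and the sample values are made up.

```python
from typing import Dict, Iterable, List


def relevant_detections(response: Dict, relevant: Iterable[str],
                        min_confidence: float = 0.0) -> List[Dict]:
    """Return the predictions whose label is one of the relevant objects."""
    wanted = {label.strip().lower() for label in relevant}
    hits = []
    for prediction in response.get("predictions", []):
        label = str(prediction.get("label", "")).lower()
        confidence = float(prediction.get("confidence", 0.0))
        if label in wanted and confidence >= min_confidence:
            hits.append(prediction)
    return hits


def should_trigger(response: Dict, relevant: Iterable[str],
                   min_confidence: float = 0.0) -> bool:
    """True if at least one relevant object was detected."""
    return bool(relevant_detections(response, relevant, min_confidence))


if __name__ == "__main__":
    # Invented sample result for one analyzed image.
    sample = {"success": True, "predictions": [
        {"label": "person", "confidence": 0.92},
        {"label": "dog", "confidence": 0.55},
    ]}
    print(should_trigger(sample, ["person", "bicycle"]))
    print(should_trigger(sample, ["car"]))
```

Only if should_trigger returns True would the trigger URL of the original camera be called.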

Setup:

The setup seems to be very complicated, but it's actually quite simple; I'm just writing a lot that should help you understand how the software works.

Overview:

1. Install Docker and install and start DeepQuestAI container in Docker

2. Configure the program according to your setup and requirements

3. Configure Blue Iris

optional: Send alert images to Telegram

1. Install Docker and install and start DeepQuestAI container in Docker

Docker can be installed on Linux, macOS and Windows. As BI users will most likely have a Windows PC running already, I will describe the setup process for Windows 7 (because imho someone who runs a Win10 PC containing all sensitive CCTV files probably does not care that much about privacy #nooffense). If you want to use your NVidia GPU, as far as I know, you need to run Docker on Linux. Here is a guide on how to use DeepQuestAI with the GPU: Using DeepStack with NVIDIA GPU — DeepStack 0.1 documentation.


3. Configure Blue Iris

I anticipate you are familiar with Blue Iris, so I keep the description simple.


If you are still too lazy to go out, you can take any jpg picture containing an object that you configured to trigger an alert, put it into the input folder and put the name of the camera (e.g. 'aifrontyard') in front of the image name. Then manually trigger the camera.

If everything works fine then you now have lowered your false detection rate significantly while enhancing the rate of correct detections.


1.1 First we want to download and install Docker Toolbox: Docker Toolbox overview

1.2 Install DeepQuestAI in Docker: open the Docker Quickstart Terminal (shortcut on the desktop) and enter 'docker pull deepquestai/deepstack' as soon as Docker has finished setting up everything. The installation of DeepQuestAI takes some time as well; meanwhile you can proceed with step 1.3

1.3 Although free, DeepQuestAI needs an API key, so we have to register an account (email not needed). Create an account at Sign Up, choose the free plan (that is sufficient for our use), go to the portal (Dashboard) and copy your API key. Notice that on the Dashboard it says 'Expires: Unlimited'.

1.4 As soon as DeepQuestAI is installed, start DeepQuestAI (from now on I'll call it DQAI) using the command 'docker run --restart=always -e VISION-DETECTION=True -v localstorage:/datastore -p 80:5000 deepquestai/deepstack'

1.5 Docker natively uses a strange IP address, 192.168.99.100, so open this address with your web browser and you'll see the DQAI interface. Now input the API key and activate it. Notice that an expiry date is now given (in 2 years). I don't know whether the API key expires or not, but getting a new API key every 2 years isn't a great problem imho.

Now the actual software that analyzes the images is already running as a web service, so if Docker hadn't set it up with this unusual IP address, we would be able to access DQAI not only from the server it is running on, but from every computer in the network.

2. Configure the program according to your setup and requirements

2.1 Download the attached program and unzip it. The program is designed to analyze images from one camera (or at least to call only one trigger url [of one camera]), but you can run multiple programs, one for every camera, if you wish.

2.2 Open the config.txt using Notepad++ or some other text editor that does not drive you crazy. Now what you see looks like the following:

Code:

DeepStack URL and Port: "192.168.99.100:80" (format: "url: port", example: "192.168.99.100:80")

Trigger URL: "http://192.168.1.133:80/admin?trigger&camera=frontyard&user=admin&pw=secretpassword" (format: "url", example: "http://192.168.1.133:80/admin?trigger&camera=frontyard&user=admin&pw=secretpassword")

Relevant objects: "person, bicycle" (format: "object, object, ...", options: see below, example: "person, bicycle, car")

Input path: "C:/BlueIris/New/" (example: "C:/BlueIris/New/")

Output path: "C:/BlueIris/AIDetections/" (empty to disable saving cutouts with detected objects, example: "C:/BlueIris/AIDetections/")

Continue after detection of relevant object: "no" (options: "yes" or "no", explanation: if the first image with relevant objects is detected, analyse the remaining images aswell?)

Input file begins with: "" (only analyze images which names start with this text, leave empty to disable the feature, example: "backyardcam")

Start delay: "" (input how many seconds the program shall wait before starting, example: "3")

Telegram option (leave empty to disable):

Telegram Token: ""

Telegram Chat ID: ""

possible trigger objects: person, bicycle, car, motorcycle, airplane,bus, train, truck, boat, traffic light, fire hydrant, stop_sign,parking meter, bench, bird, cat, dog, horse, sheep, cow, elephant,bear, zebra, giraffe, backpack, umbrella, handbag, tie, suitcase,frisbee, skis, snowboard, sports ball, kite, baseball bat, baseball glove,skateboard, surfboard, tennis racket, bottle, wine glass, cup, fork,knife, spoon, bowl, banana, apple, sandwich, orange, broccoli, carrot,hot dog, pizza, donot, cake, chair, couch, potted plant, bed, dining table,toilet, tv, laptop, mouse, remote, keyboard, cell phone, microwave,oven, toaster, sink, refrigerator, book, clock, vase, scissors, teddy bear, hair dryer, toothbrush

Please read through the explanations, modify all parameters according to your needs and save the file. When setting up your configuration, please mind the following information:

Input path: The input path is where Blue Iris stores the alert images. We can already add this path to Blue Iris by opening the settings of Blue Iris, then 'Clips and archiving', then clicking on one of the aux folders in the list on the left (if you click on e.g. 'Aux_7' and don't move the cursor for 1-2s, you will be able to change the displayed name) and then setting it to the input path we also specified in the config.txt.

If we want the input path to be a subfolder of the folder where the program is located, we can use a relative path, e.g. "./input/" would create a new folder 'input' in the program's base directory.

Output path: If we don't specify an output folder, the program won't store the image cutouts containing detected objects (saves resources). The output path can be a relative path just like the input path.

Relevant objects: Please note that, although they are listed, trigger objects consisting of multiple words (like 'fire hydrant') probably won't work due to the way the config file is processed by the program. It's the first actual program I wrote, so please excuse that.

Input file begins with: Blue Iris will store the alert images under names that start with the camera name, so p.e. 'aifrontyard.20180326_054241.0.64.jpg'. If we store the alert images of multiple cameras in that folder, we can filter the images from the duplicated camera out by setting the option 'Input file begins with:' to the name of our duplicated camera, in this case 'aifrontyard'.

Start delay: It makes sense to set 'Start delay' to maybe 2-5s, because BI needs a short time to save the images. If we set Blue Iris to make e.g. a snapshot every second for the next 5s after an alert, we furthermore profit from the fact that, after the program has analyzed the first images, it will check whether new images were saved to the folder while it was analyzing. Unless configured otherwise, it will analyze the new images as well.

Continue after detection of relevant object: This option is quite relevant. If we just want to get an alert when something is detected, "no" is a good configuration. But this also means that as soon as the first person is detected, the program will call the alert URL and then stop analyzing the remaining images (less CPU-heavy). So if we want to be able to see ALL object cutouts from ALL alert images in the output path, we will want to set this feature to "yes".

Trigger URL(s): If we don't specify one or multiple 'Trigger URL(s)', no trigger call will be made. If we want to call multiple URLs, we have to separate the URLs with commas. Every URL has to start with 'http://'. The trigger URL is not limited to Blue Iris: practically anything that offers a URL which can be called as a trigger can be triggered.

Alerting Blue Iris with the Trigger URL: To use the trigger URL, you have to do the following in Blue Iris:

1. Go to Settings->Webserver->Advanced and disable 'use secure session keys and login page'.

2. Go to Settings->Users and either select a user and copy the password, or create a new administrator user.

3. Open the camera properties of the camera you want to run the AI analysis on and, under General, copy the short camera name (e.g. 'frontyard').

4. Take the following URL and fill in the Blue Iris IP, user, password and short camera name: http://[Blue Iris IP]:80/admin?trigger&camera=[short cam name]&user=[user]&pw=[password] .

Once you have filled in everything, copy/paste the whole URL into your web browser and make sure it causes an alert.
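If you prefer to assemble the URL with a script, the steps above can be sketched as follows. The helper name `build_trigger_url` is hypothetical; the only addition to the format given above is that user, password and camera name are percent-encoded so that special characters don't break the URL.

```python
from urllib.parse import quote


def build_trigger_url(ip, camera, user, password, port=80):
    """Build a Blue Iris trigger URL in the format described above."""
    return (
        f"http://{ip}:{port}/admin?trigger"
        f"&camera={quote(camera, safe='')}"
        f"&user={quote(user, safe='')}"
        f"&pw={quote(password, safe='')}"
    )


if __name__ == "__main__":
    print(build_trigger_url("192.168.1.133", "frontyard", "admin", "secretpassword"))
```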

Telegram option: Leave this empty for now. If you would like to receive Telegram notifications, you can set them up later, once everything else runs properly.

Restore config.txt: If you accidentally messed up the config.txt, just delete it and run testAI.exe once; it will recreate a working template.

A configuration might, for example, look like this:

Code:

DeepStack URL and Port: "192.168.99.100:80" (format: "url: port", example: "192.168.99.100:80")

Trigger URL: "http://192.168.1.133:80/admin?trigger&camera=frontyard&user=admin&pw=secretpassword" (format: "url", example: "http://192.168.1.133:80/admin?trigger&camera=frontyard&user=admin&pw=secretpassword")

Relevant objects: "person, bicycle, car, truck" (format: "object, object, ...", options: see below, example: "person, bicycle, car")

Input path: "C:/BlueIris/New/" (example: "C:/BlueIris/New/")

Output path: "C:/BlueIris/AIDetections/" (empty to disable saving cutouts with detected objects, example: "C:/BlueIris/AIDetections/")

Continue after detection of relevant object: "no" (options: "yes" or "no", explanation: if the first image with relevant objects is detected, analyse the remaining images aswell?)

Input file begins with: "aifrontyard" (only analyze images which names start with this text, leave empty to disable the feature, example: "backyardcam")

Start delay: "3" (input how many seconds the program shall wait before starting, example: "3")

Telegram option (leave empty to disable):

Telegram Token: ""

Telegram Chat ID: ""
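The lines above follow the pattern `Name: "value" (hint)`. If you want to read such a file from your own scripts, one possible approach (an assumption on my side, not how testAI.exe does it) is to take the first quoted string after the option name and ignore the hint in brackets. `parse_config` is a hypothetical helper.

```python
import re

# Option name, a colon, then the first double-quoted value on the line.
LINE_PATTERN = re.compile(r'^\s*([^:"]+?)\s*:\s*"([^"]*)"')


def parse_config(text):
    """Return a dict mapping option names to their quoted values."""
    options = {}
    for line in text.splitlines():
        match = LINE_PATTERN.match(line)
        if match:  # lines without a quoted value (headings, blanks) are skipped
            options[match.group(1)] = match.group(2)
    return options
```

An empty pair of quotes, as for 'Telegram Token', yields an empty string, which a script can treat as "feature disabled".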

possible trigger objects: person, bicycle, car, motorcycle, airplane, bus, train, truck, boat, traffic light, fire hydrant, stop_sign, parking meter, bench, bird, cat, dog, horse, sheep, cow, elephant, bear, zebra, giraffe, backpack, umbrella, handbag, tie, suitcase, frisbee, skis, snowboard, sports ball, kite, baseball bat, baseball glove, skateboard, surfboard, tennis racket, bottle, wine glass, cup, fork, knife, spoon, bowl, banana, apple, sandwich, orange, broccoli, carrot, hot dog, pizza, donot, cake, chair, couch, potted plant, bed, dining table, toilet, tv, laptop, mouse, remote, keyboard, cell phone, microwave, oven, toaster, sink, refrigerator, book, clock, vase, scissors, teddy bear, hair dryer, toothbrush

3. Configure Blue Iris

I assume you are familiar with Blue Iris, so I'll keep the description simple.

3.1 First, we have to create a camera whose only purpose is to start the AI detection program when motion is detected. So add a new camera, give it a name that makes sense (e.g. if your original camera is called 'frontyard', call it 'aifrontyard'), and under type select 'copy from another camera' and choose the appropriate one.

3.2 Keep in mind that this camera's only job is to detect motion and then start the AI program, so disable all features on this camera that are not needed (recording, pretrigger, etc.). Because Blue Iris is already prepared to work with camera clones, it is not necessary to lower the resolution to save CPU resources. Quite the opposite: if the camera stream URL isn't changed, there will be zero additional CPU usage, whereas changing the stream URL to e.g. a profile with a lower resolution will cause additional CPU load.

3.3 Now go to Record, check 'JPEG snapshot each (mm:ss)', select the folder you defined as the input folder in the AI detection program, check the box 'Only when triggered' and set the interval to e.g. 0:01.0 (one image every second). Furthermore, you might want to disable 'Create Alert list images when triggered', because otherwise a lot of false-alarm images (remember, we set the motion detection to be very sensitive) will be stored in your alerts folder.

3.4 Go to 'Trigger' and set the break time 'End trigger unless retriggered' to e.g. 5s. While setting this value, remember the following: if you set the interval in step 3.3 to 1s, then 5s means that 5 images are created and have to be analyzed one after the other.
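The relation between break time and snapshot interval is simple arithmetic. The sketch below only restates it; the per-image analysis time is an assumed value that you would have to measure on your own machine.

```python
def images_per_alert(break_time_s, snapshot_interval_s):
    """Rough number of snapshots Blue Iris saves during one trigger."""
    if snapshot_interval_s <= 0:
        raise ValueError("snapshot interval must be positive")
    return int(break_time_s // snapshot_interval_s)


def worst_case_analysis_time(break_time_s, snapshot_interval_s, seconds_per_image):
    """Time needed if every image is analyzed one after the other."""
    return images_per_alert(break_time_s, snapshot_interval_s) * seconds_per_image


if __name__ == "__main__":
    # 5s break time, one snapshot per second, assumed 0.8s of analysis per image
    print(images_per_alert(5, 1), worst_case_analysis_time(5, 1, 0.8))
```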

3.5 Last but not least, go to 'Alerts', check 'run a program or execute a script', click 'configure' and select testAI.exe (in the attachment) as the file to run. For the initial phase of using the AI, it might be useful to set the window to 'normal' rather than 'hide', because this makes troubleshooting easier.

Now run around in front of your cameras or, if you are terribly lazy, right-click on the new camera we created and select 'Trigger now'. After the AI program has done the analysis, check the trigger clips of the original camera and the image cutouts in the output folder. (If you just clicked 'Trigger now', you should get neither output images nor an alert because, hopefully, there is no one sneaking around on your property trying to steal your car. Otherwise get the shotgun and .... no no no, that's a bad attitude.) If you are still too lazy to go out, you can take any jpg picture containing an object that you configured to trigger an alert, put it into the input folder and put the name of the camera (e.g. 'aifrontyard') in front of the image name. Then manually trigger the camera.
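The "lazy" test with a prepared picture can also be scripted. This is just a convenience sketch; `stage_test_image` is a made-up helper, and the dot between camera name and original file name is my own choice, modelled on the Blue Iris file names shown earlier.

```python
import shutil
from pathlib import Path


def stage_test_image(source, input_folder, camera_name):
    """Copy a test picture into the input folder with the camera name in front."""
    source = Path(source)
    target = Path(input_folder) / f"{camera_name}.{source.name}"
    shutil.copy(source, target)  # the original picture stays where it is
    return target
```

For example, `stage_test_image("person.jpg", "C:/BlueIris/New/", "aifrontyard")` creates 'aifrontyard.person.jpg' in the input folder; after that, trigger the camera manually.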

If everything works fine, you have now lowered your false detection rate significantly while increasing the rate of correct detections.

The program can send trigger images using a Telegram bot. The program needs two strings to connect to Telegram: 1. the Telegram API key (the bot token) and 2. the chat-id of the chat between you and the bot.

4.1 To create a bot and get the API key, see: Bots: An introduction for developers

4.2 Now contact the bot you created with the telegram account you want to receive the notifications on.

4.3 Retrieve the chat-id: Telegram Bot - how to get a group chat id?

4.4 Open the config.txt file of the AI program and fill in 'Telegram Token' and 'Telegram Chat ID'.

4.5 Start testAI.exe and check that, under Options, it says that Telegram notifications are enabled.
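For step 4.3, one common way to find the chat-id is the Bot API method getUpdates: after you have written a message to your bot, open the getUpdates URL in a browser and look for the "chat" entry. The sketch below builds that URL and pulls the chat ids out of the JSON answer. The function names and the token in the example are placeholders, not real values.

```python
import json


def get_updates_url(token):
    """URL of the Telegram Bot API 'getUpdates' method for this bot token."""
    return f"https://api.telegram.org/bot{token}/getUpdates"


def extract_chat_ids(response):
    """Collect the distinct chat ids found in a parsed getUpdates response."""
    chat_ids = []
    for update in response.get("result", []):
        chat = update.get("message", {}).get("chat", {})
        if "id" in chat and chat["id"] not in chat_ids:
            chat_ids.append(chat["id"])
    return chat_ids


if __name__ == "__main__":
    # Shortened example answer, as returned after sending the bot one message.
    answer = json.loads('{"ok": true, "result": [{"update_id": 1, '
                        '"message": {"chat": {"id": 12345678, "type": "private"}}}]}')
    print(get_updates_url("<your-token>"))
    print(extract_chat_ids(answer))
```

The id printed by `extract_chat_ids` is the value to enter as 'Telegram Chat ID' in config.txt.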

Attachments

- Release AI Tool v0.2.zip (2.3 MB)
- Release AI Tool v0.3.zip (2.4 MB)
- Release AI Tool v0.5.zip (2.4 MB)
- Release AI Tool v0.6.zip (2.4 MB)
- Release AI Tool v1.43.zip (2.6 MB)
- AI Tool 1.53.zip (2.9 MB)
- AI Tool 1.54.zip (2.9 MB)
- AI Tool 1.55.zip (2.9 MB)
- AI Tool 1.56.zip (2.9 MB)
- AI Tool 1.62.zip (2.9 MB)
- AI Tool 1.63.zip (2.9 MB)
- AI Tool 1.64.zip (2.9 MB)
- AI Tool 1.65.zip (2.5 MB)
- AI.Tool.1.67.zip (2.6 MB)